Discovery workshop toolkit to identify product and service opportunities

One of my passions as a designer is helping a business take stock of an existing part of a product or service in order to expose where value is leaking and where opportunity exists.

The outputs of a strong discovery rarely look the same. Opportunities can surface across multiple layers of the business, for example:

1. Poor user-facing digital experience. For example, a missing account creation method or suboptimal navigation taxonomy.

2. An outdated interaction with customer services. For example, legacy phone routing that struggles to get a customer to the right help department.

3. A gap in the marketing contact strategy. For example, a confirmation email that’s not optimised to avoid the junk folder.

4. A missed touchpoint at a physical interaction. For example, a missing ‘I’ve arrived’ triage at a GP’s surgery.

The surface-level problems may look different, but they all come down to misalignments, gaps or inefficiencies somewhere in a customer lifecycle.

Turning customer and user experience opportunities into business value

A strong service design skillset helps a business apply a user-first lens to uncover and prioritise these issues. In many organisations, product and design remain minority disciplines within a technology-led environment, which means we often need to articulate impact more clearly than anyone else in the room.

The most effective way to do that? Translate experience gaps into commercial terms.

- Lost revenue

- Increased operational costs

- Wasted staff time

- Reduced capacity

- Increased support overhead

- Drop-offs in conversion

- Delays that compound downstream

When framed in pound sterling rather than sentiment, even the most sceptical C-suite detractor starts to pay attention.
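As a rough illustration of that translation, the sketch below turns a drop-off at a single journey step into an annual revenue figure. Every input is a made-up placeholder rather than data from a real discovery, and the function name and the `recoverable_share` parameter are assumptions introduced for this example only.

```python
def annual_lost_revenue(
    monthly_visitors: int,
    drop_off_rate: float,
    recoverable_share: float,
    value_per_customer: float,
) -> float:
    """Estimate the yearly revenue leaking at one journey step.

    All inputs are assumptions you would replace with your own data.
    """
    # Customers lost each month that a fix could realistically win back
    recoverable_per_month = monthly_visitors * drop_off_rate * recoverable_share
    return recoverable_per_month * value_per_customer * 12


# Hypothetical numbers: 10,000 visitors a month, 30% abandon at sign-up,
# 10% of those are assumed recoverable, each worth £40.
estimate = annual_lost_revenue(
    monthly_visitors=10_000,
    drop_off_rate=0.30,
    recoverable_share=0.10,
    value_per_customer=40.0,
)
print(f"£{estimate:,.0f} per year")
```

The value of a calculation like this is less the precision of the figure and more that each assumption is visible and can be challenged by the people who own the data.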

From head-scratching to a plan

If you ask a broad question like, “Where can we improve value across the business?”, you’ll receive a wide range of perspectives.

Some will be subjective. Some will be informed but siloed. Some will be anecdotal.

So this article walks through the activities I use in my discoveries, and the outcomes of each: first diverging to collect all the ideas and insights, then converging to narrow down where to focus first.

I recommend reading this article in sequence. It's designed as an end-to-end process and each step is important to the next one.

However, the activities I cover can be equally useful in isolation in certain circumstances. Here's the ordered list in case you want to jump to a specific section: Journey / Service mapping, Empathy mapping, Data dump, How-Might-We statements, Hypothesis forming, Prioritisation, Rapid team ideation, Team dot voting.

Using AI in the process

In our recent article we created a directory of practical AI tools to accelerate different activities from right across the product development lifecycle. I’ll add my thoughts on using AI against each of the phases in the article and you can decide whether bringing AI into your process has value.

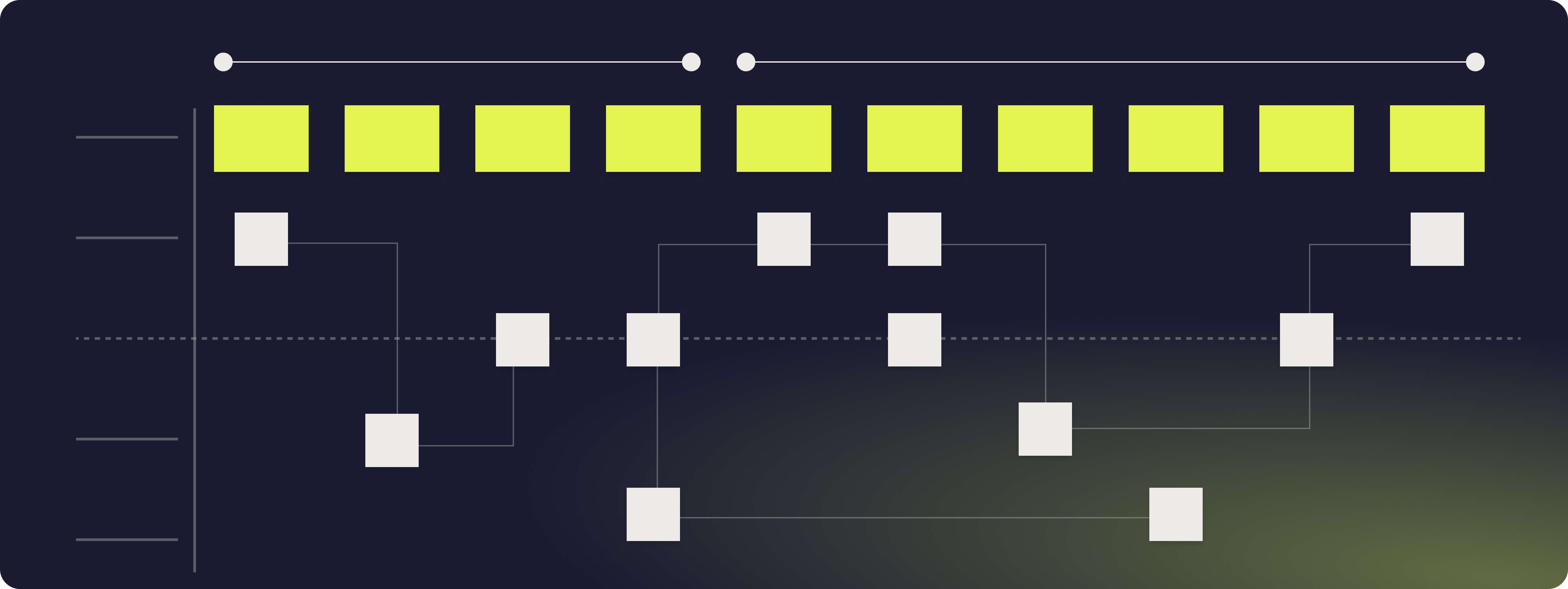

Journey mapping and service blueprinting

The first phase of discovery is about creating a single source of truth — a journey map or service blueprint that consolidates fragmented organisational knowledge into one coherent view of the service.

Every organisation holds its knowledge in siloes. Some stakeholders see the service horizontally, with a broad strategic view across multiple touchpoints. Others see it vertically, with deep expertise in one specific operational or technical layer.

A strong blueprint surfaces both perspectives and forces them into alignment. It captures both what the user sees and what the organisation must do to enable it.

It documents:

- User-facing touchpoints

- Frontstage interactions

- Backstage processes

- Supporting systems and technologies

A technical subject matter expert (SME) helps you map the “under the hood” architecture and dependencies. A product manager helps articulate the intent and experience from the user’s perspective. Operations might expose capacity constraints you didn’t anticipate.

The value lies in stitching these views together. Once you have, you’ve created a tool to anchor your insights and findings against, and to explore new ideas that may previously have been hidden.

As discovery progresses, the blueprint becomes the backbone of the process.

Observations, insights, friction points, hypotheses, and opportunity areas are layered onto it.

Rather than capturing insights in isolation, everything is anchored to a shared system view, preventing fragmented thinking and ensuring recommendations remain grounded in operational reality.

How could I use AI here?

If our Hyperact template isn't quite right for your use case, you could use an AI assistant from within your mapping tool (e.g. Miro) to help lay out the specifics of your own. You could also use it to drill down to more granular phases and touchpoints based on your top-level information. I would avoid asking the AI to help with the mapping itself, as this requires human-level context from your SMEs.

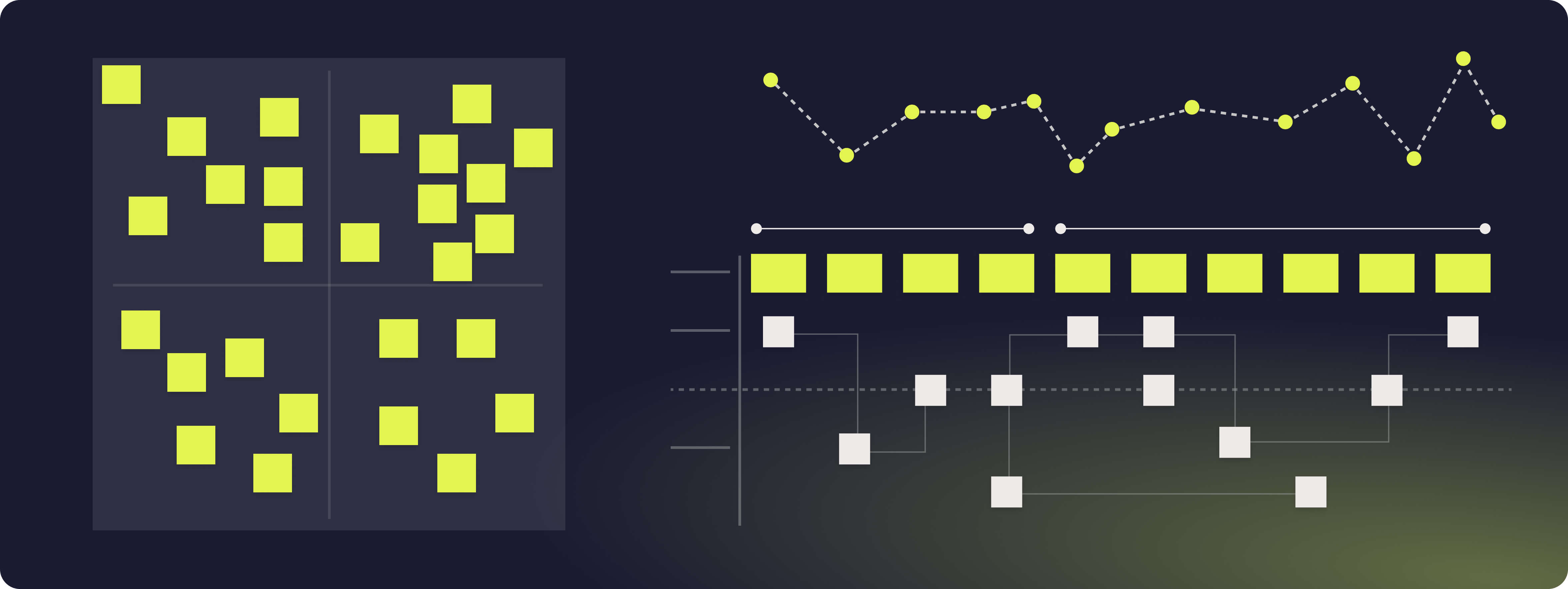

Empathy mapping

The next phase forces the team to put themselves in the user’s shoes.

I say “force” deliberately. In my experience, empathy mapping is met with scepticism at the start. It can feel like theatre, something soft and abstract that distracts from “real work”. But almost without exception, by the end of the session the same people acknowledge the shift in perspective it creates.

That shift is the point.

Empathy mapping is about surfacing the assumptions we hold about users and making them explicit. When facilitated well, it becomes a structured way of extracting rapid-fire insight from a cross-functional group and pressure-testing it collectively.

I’ve covered this activity and its value in detail in a previous Hyperact article, 'A guide to empathy mapping'. To summarise, the facilitator takes a cross-functional group through an exercise to extract as many quick ideas as possible from the user’s perspective across the following categories:

- What is my user saying? (What emotions are they outwardly expressing to others?)

- What is my user doing? (What physical manifestations of emotions might they display?)

- What is my user thinking? (What are they keeping to themselves?)

- How is my user feeling? (What mental manifestations of emotions might they foster internally or display outwardly?)

- What does my user have to gain?

- What pains will my user encounter?

These are then sorted into themes and discussed as a group. This also gives a solid starting point to map any user emotions against the map created in the first activity, which adds further evidence for opportunities for improvement across the service.

How could I use AI here?

I would advise running your initial sessions with humans if you have access to people who already know the product. Afterwards, give your AI as much context as possible about the problem space and ask it to run its own pass for say, do, think and feel, to see whether it helps further form your mental model of the users.

You can also use an AI to affinity sort your scattered post-its into categories if you don't have the headspace or the time to do this during the workshopping session.

Data dump

Once the journey map is established and user emotions have been layered onto it, the next step is to extract as much existing evidence as possible from the organisation. This is where discovery moves from perspective to proof.

The goal in this phase is simple: surface everything the business already knows, whether that knowledge sits in dashboards, slide decks, archived research, or in the heads of analysts and product managers.

Practically, this means working closely with product leads, user researchers, and data analysts to access:

- Quantitative dashboards and raw data extracts

- Focused, on-the-fly data pulls aligned to emerging questions

- Historic research recordings and usability sessions

- Pre-existing synthesis in documents and presentation packs

(Here’s a Hyperact article on unlocking your product data if you need some advice on improving your internal data maturity.)

The quality of your discovery will be directly proportional to how well you mine this layer.

One of the most valuable exercises in this phase is pairing directly with a product or data analyst. Together, you define the questions you want answered before any data is pulled. Their outputs then become the raw material for insight statements.

In some environments this can feel indulgent, as analyst time is often stretched thin. But when you can create that space, the payoff is significant. Converting data into clear, decision-ready insights lays the groundwork for robust hypothesis formation in the next phase.

How could I use AI here?

You could give the AI your data sources and ask it to help turn them into insight statements. Check the outputs carefully to make sure no hallucinations are present. In this instance you could favour tools that ground their answers in the sources you provide, such as NotebookLM, which cites the passages it draws on so you can trace each response back to the exact source.

An optional activity at this point is some competitor analysis to add more qualitative insights to your data points. Don’t forget - just because a company has decided to focus on a feature or part of their service doesn’t mean it’s right, so do your due diligence on emerging trends and industry standards.

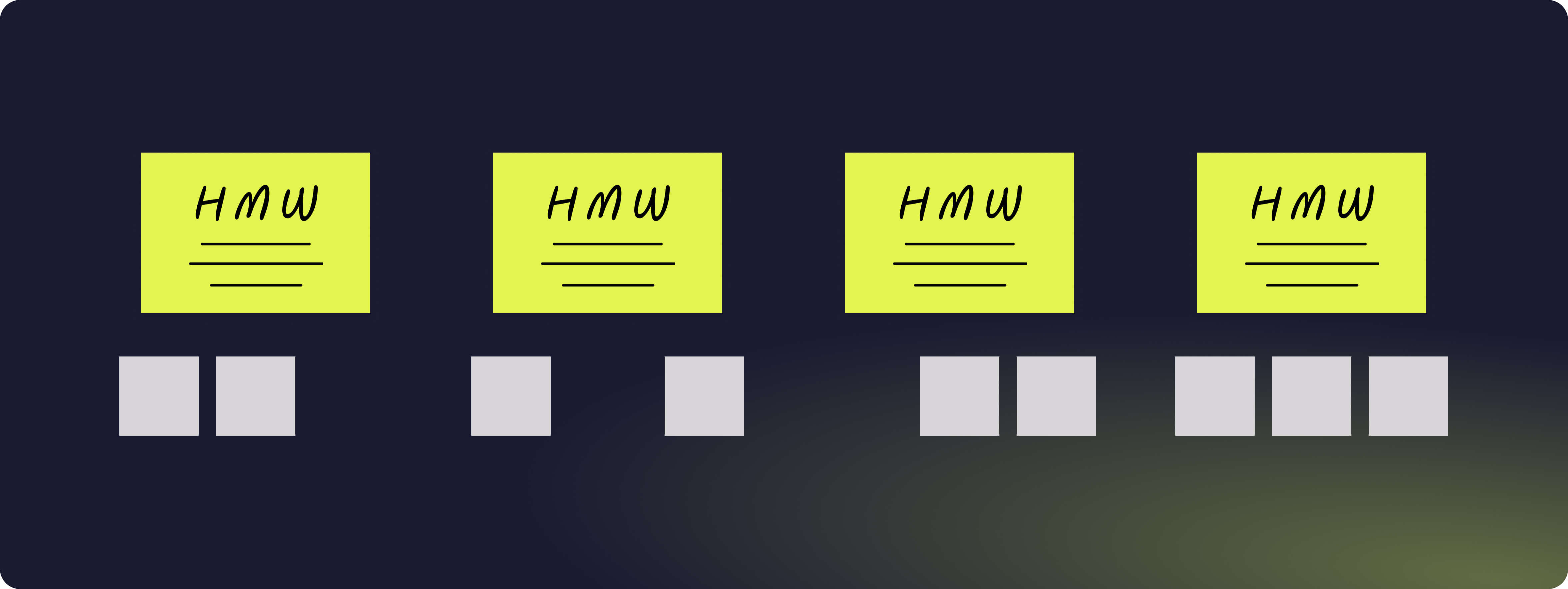

How-Might-We statements

At this stage, we’ve mapped the system, layered emotion onto it, and grounded ourselves in evidence. The next move is to translate that material into structured opportunity space. This is where “How Might We” statements come in.

The purpose of this phase is to articulate your service problems clearly enough that they can anchor strong hypotheses in the next stage. A well-formed HMW captures intent without prescribing a solution.

The format is deliberately simple:

How might we [intended experience] for [user] so that [desired effect]?

The power lies in its openness. It invites possibility without assuming the answer.

For example:

- How might we reduce first-time friction for new users so that they reach their first moment of value within minutes?

- How might we create a sense of progress for casual users so that they feel motivated to return?

- How might we increase transparency for cautious customers so that they feel safe sharing their data?

At their best, HMWs balance ambition and specificity. They can be broad enough to open new directions, or tightly focused on a particular friction point uncovered in the journey mapping and data analysis. The earlier phases determine the quality of the statements here. Weak inputs lead to vague HMWs. Strong evidence leads to sharp opportunity framing.

Volume matters in this phase. The objective is divergence. Individuals generate their own statements first, then share them with the group. This isn’t about defending your idea; it’s about exposing it to others so it can spark better ones.

The first round often unlocks momentum. A second round — after hearing others’ thinking — typically produces stronger, more refined statements. In some cases, it’s valuable to allow asynchronous generation over the following days as ideas continue to surface once people return to their day jobs.

This phase should feel expansive. Convergence comes soon.

How could I use AI here?

Optionally, you could feed the AI the context as well as all the HMWs the team have collectively generated and ask it to fill in any useful gaps. Review the suggestions closely and validate their relevance. Do not bulk these out for the sake of it, as they will be used to hang your hypotheses on in the next step.

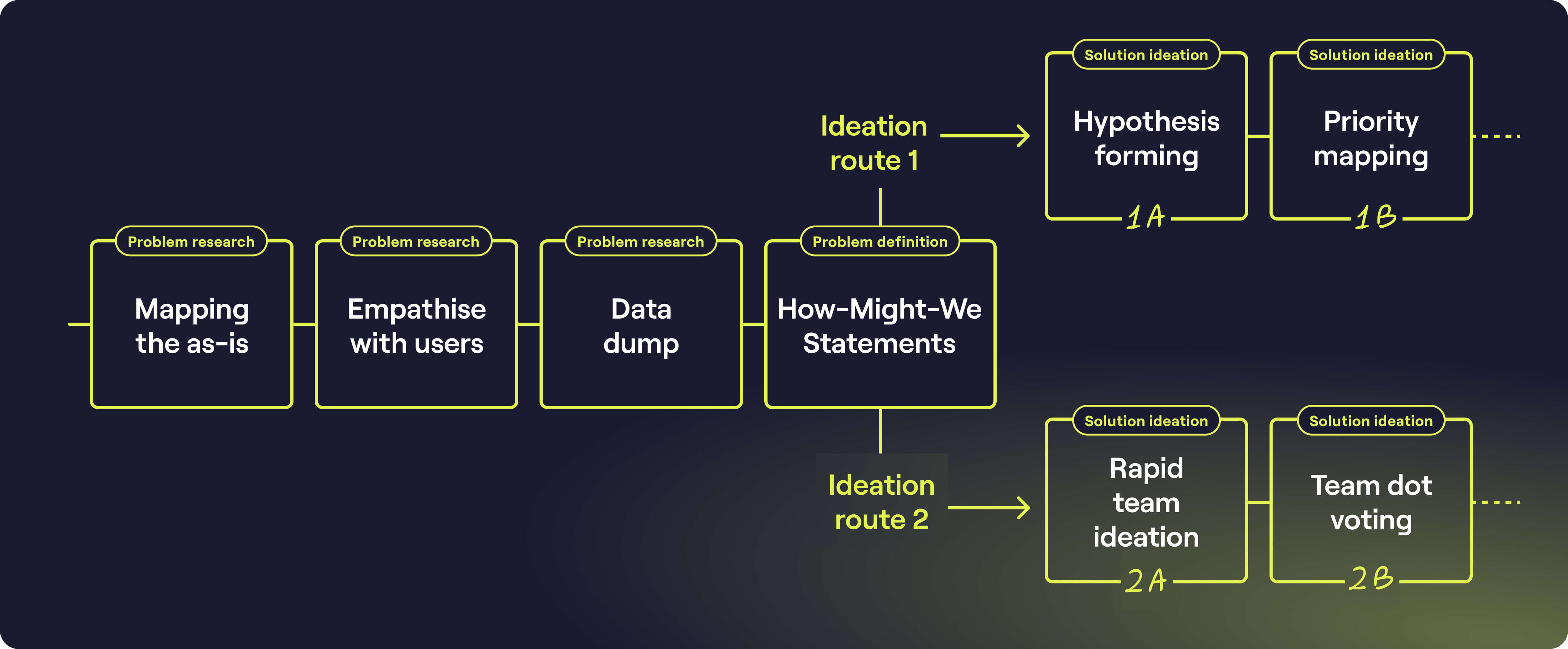

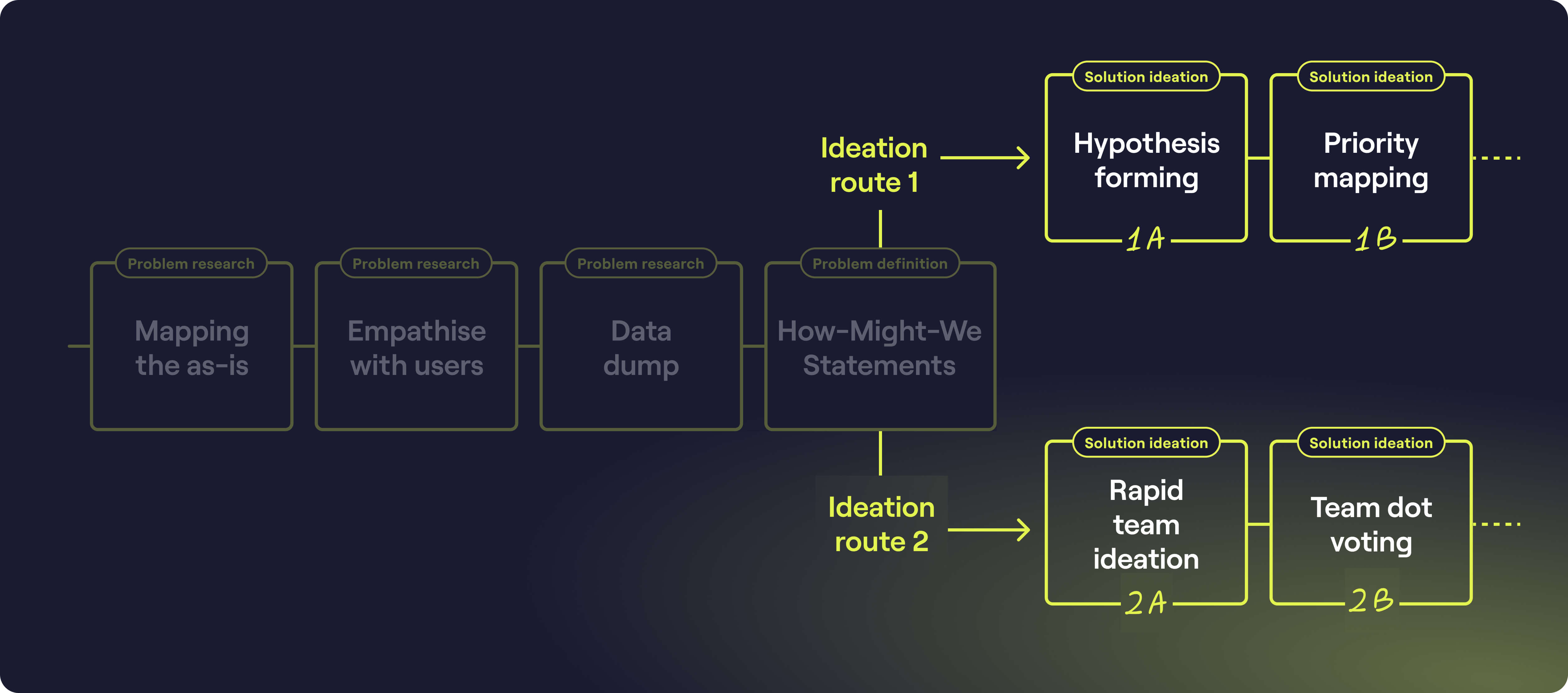

Now to pick a route to your ideation method

At this point there are two routes you can take. The choice depends on which outputs you think will be most useful for your designer or design team as they progress to design and optional prototype testing.

This decision can come from a simple discussion regarding the options with the relevant stakeholders. There is no right or wrong decision.

Ideation route 1: Hypothesis forming & prioritisation

1A: Hypothesis forming

This is where discovery shifts from exploration to intent.

The How Might We statements define the opportunity space. Hypotheses define the bet.

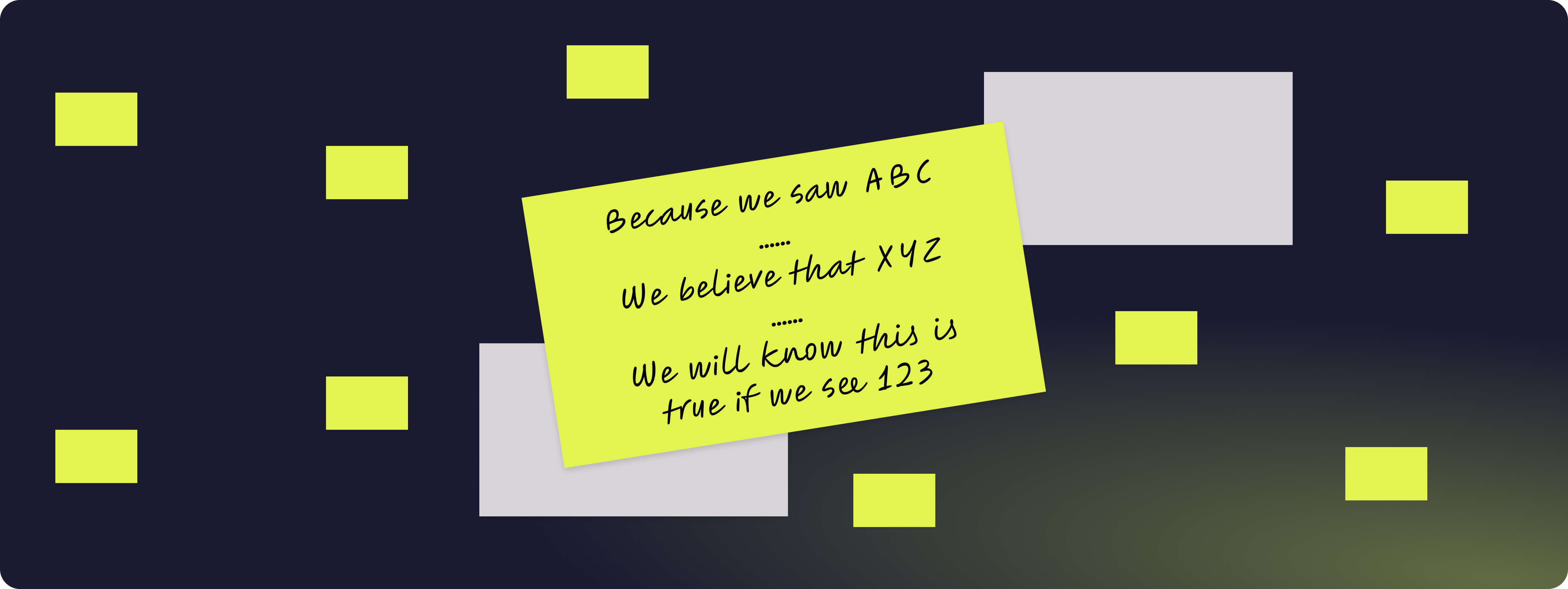

At this stage, we combine structured problem framing with the evidence surfaced in the data phase to articulate testable assumptions. A good hypothesis makes three things explicit: the insight driving it, the change we’re proposing, and the outcome we expect to see.

In practice, hypotheses tend to fall into two categories.

The first is quantitative, where success can be measured numerically. These are appropriate when behavioural shifts, conversion rates, time savings or revenue impact can be tracked directly. The structure is straightforward:

Because we saw [insight], we expect that [idea] will result in [measurable change]. We will measure this using [metric].

The second is qualitative, where validation is based on sentiment, perception or behavioural signals that are harder to reduce to a single metric. In these cases, the framing becomes:

Because we saw [insight], we expect that [idea] will result in [change]. We’ll know this is true if we observe [user feedback or behaviour shift].

The discipline here is critical. Vague hypotheses lead to vague experiments. Sharp hypotheses create focused learning.
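One way to keep that discipline is to capture each hypothesis as structured fields rather than free text. The sketch below is a minimal illustration that renders the two templates above; the class name, field names and example content are all hypothetical and not part of any tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Hypothesis:
    insight: str                   # what we saw in the evidence
    idea: str                      # the change we are proposing
    change: str                    # the outcome we expect
    metric: Optional[str] = None   # set for quantitative hypotheses
    signal: Optional[str] = None   # set for qualitative hypotheses

    def statement(self) -> str:
        # Refuse vague hypotheses that do not say how they will be validated
        if not (self.metric or self.signal):
            raise ValueError("State a metric or an observable signal")
        base = (
            f"Because we saw {self.insight}, we expect that {self.idea} "
            f"will result in {self.change}."
        )
        if self.metric:
            return f"{base} We will measure this using {self.metric}."
        return f"{base} We'll know this is true if we observe {self.signal}."


# Hypothetical example content, for illustration only
example = Hypothesis(
    insight="new users abandoning the sign-up form",
    idea="offering a single sign-on option",
    change="more completed registrations",
    metric="sign-up completion rate",
)
print(example.statement())
```

Making the measure a required choice means a hypothesis cannot be written down without stating how it would be proven wrong.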

This activity works best when facilitated and crowd-sourced from the cross-functional group that has been involved throughout the discovery. Product, data, research, operations and technology perspectives all strengthen the quality of the bets being formed. Even if attendance fluctuates across phases, maintaining continuity where possible is valuable. Previous insights often spark stronger, more grounded hypotheses later in the process.

Once generated, each hypothesis should be shared with the group. I recommend tagging an originator or sponsor to each one. Not for ownership in a territorial sense, but for traceability. As prioritisation begins, it’s easy for ideas to lose context. Maintaining a clear link back to their source preserves intent and makes follow-up discussion easier.

At this point, you should have a visible set of structured bets, grounded in evidence and ready to be evaluated.

Prioritisation now becomes meaningful because you’re choosing between informed options, not opinions.

How could I use AI here?

At this point an AI could be really useful to form further hypotheses. You’d need to build up a strong library of all the artefacts you’ve created so far in order to give broad and deep context. Give the AI specifics on how you need the hypothesis statements to be formed and see what comes back.

I would recommend doing this in isolation from the group to avoid triggering imposter syndrome. You want to create a safe space for people to be vulnerable with their ideation. Triage any AI answers and add only the very highest quality ideas that fill genuine gaps. Be sure to label AI as the originator of these, in the same fashion as you've labelled the other originators in your team.

1B: Prioritisation

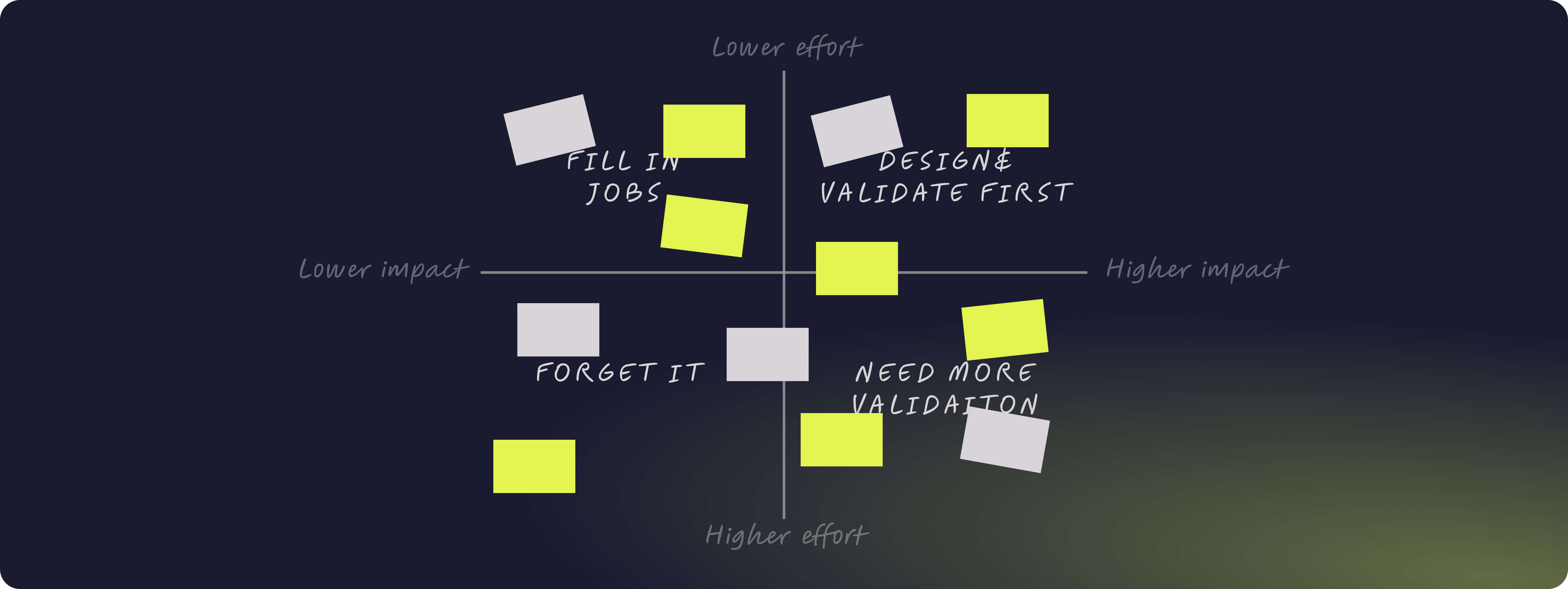

The final stage of this route is about committing to where you place your first bets.

Up to this point, you’ve diverged widely, surfaced insight, formed structured hypotheses and made opportunity visible. Prioritisation is where discipline returns.

A simple effort–impact matrix remains one of the most effective tools for this decision. It’s not sophisticated. It doesn’t need to be. Its power lies in forcing trade-offs into the open.

Effort should be considered broadly. It includes the effort required to validate the hypothesis, design the intervention, implement it, release it, measure it and maintain it. At this stage you won’t know the exact solution, so effort is an informed estimate, a collective judgement based on the expertise in the room.

Impact, meanwhile, is anchored in the value framing established at the start of the discovery. If this hypothesis proves true, how materially would it shift user experience and business performance? Would it reduce friction at a critical moment? Unlock measurable revenue? Remove operational waste? Create a step-change in sentiment?

When plotted against each other, four clear territories emerge.

- High impact and low effort items should be designed and validated first. These are your smartest early bets.

- High impact but high effort ideas require deeper validation before committing serious resource. They may represent transformational opportunities, but they carry greater risk.

- Low impact, low effort items can fill gaps or support incremental improvement, but they should not dominate your roadmap.

- Low impact, high effort initiatives should be challenged rigorously. If the upside is marginal and the cost is significant, they are rarely defensible.
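The four territories can be sketched as a simple classification. This is a minimal illustration, assuming the group scores each hypothesis from 1 to 5 for impact and effort and treats 3 or above as "high"; the scale, the midpoint and the example hypotheses are assumptions, not a prescribed method.

```python
def quadrant(impact: int, effort: int, midpoint: int = 3) -> str:
    """Place a hypothesis in one of the four effort-impact territories."""
    high_impact = impact >= midpoint
    high_effort = effort >= midpoint
    if high_impact and not high_effort:
        return "design and validate first"
    if high_impact and high_effort:
        return "validate further before committing"
    if not high_impact and not high_effort:
        return "incremental gap-filler"
    return "challenge rigorously"


# Hypothetical scores agreed by the group: (impact, effort)
scores = {
    "Single sign-on at registration": (5, 2),
    "Rebuild the booking platform": (5, 5),
    "Reword the confirmation email": (2, 1),
    "Custom reporting suite": (1, 4),
}

for name, (impact, effort) in scores.items():
    print(f"{name}: {quadrant(impact, effort)}")
```

The scores themselves remain a collective judgement; the code only makes the resulting placement explicit and repeatable.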

The act of placing hypotheses onto the matrix is as valuable as the outcome. The first placement is the hardest. After that, relative comparison becomes easier as the shape of your opportunity landscape emerges.

You are left with a rationale. A shared, visible logic for why certain bets move forward and others do not. And that clarity is often what discovery is really there to provide. That’s it for route 1!

How could I use AI here?

My advice would be to avoid AI in the prioritisation phase. It’s a super important part of the process, but it isn't a particularly big administrative or creative overhead, and human-first insight is critical.

Ideation route 2: Rapid team ideation & voting

2A: Rapid team ideation

Before running ideation, I always remind the group that solutions do not need to be digital. Some of the most effective interventions uncovered in discovery are operational, communicative or behavioural shifts rather than product features. The earlier mapping work should have made that clear.

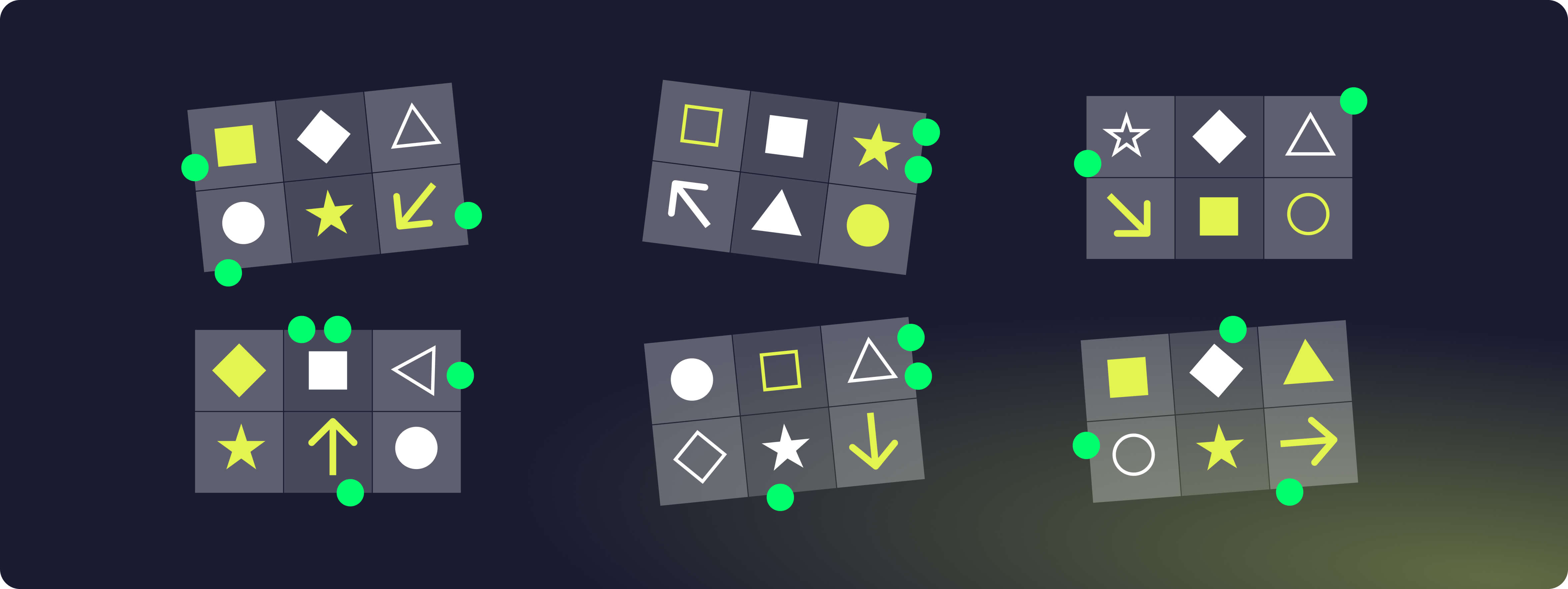

One format I frequently use is Crazy 8s. It’s simple, time-bound and democratises contribution.

The structure is straightforward. First, frame the challenge. You can either anchor everyone to a single How Might We statement, creating focused output, or allow individuals to choose their own. The choice depends on how tightly you want to converge at this stage.

Participants then divide a sheet of paper into eight sections and generate one distinct idea per section, working silently and under strict time pressure. The constraint is intentional. Eight minutes. Eight ideas. No discussion, no refinement.

The speed prevents overthinking and the silence prevents groupthink.

Once complete, participants briefly present their strongest concepts. The discussion that follows often unlocks additional thinking as ideas collide and evolve. In many cases, a second round is valuable, this time asking individuals to develop their strongest four ideas further, drawing inspiration from anything shared in the room. The goal here is momentum rather than polished solutions.

Used well, rapid ideation converts energy into tangible options quickly. It can also increase buy-in, because people see their thinking move directly into the next stage of validation.

The key is knowing when to use it. In politically sensitive environments or highly regulated contexts, structured hypothesis formation may provide more rigour. In collaborative, exploratory settings, rapid ideation can accelerate progress without sacrificing quality.

How could I use AI here?

I would recommend keeping AI out of this step by default. If you feel you’ve not generated enough ideas, you could augment them by giving the AI the context of all your progress to date and asking for further ideas. If you receive anything interesting, make sure you can link the idea back to a source, and that you’re happy to defend the decision to use AI in ideation if challenged.

2B: Team dot voting

If rapid ideation has generated a healthy volume of potential solutions, the next question becomes where to focus.

In this alternative route, prioritisation is achieved through structured consensus rather than formal effort–impact evaluation. Dot voting can be an effective mechanism when the group has been deeply involved throughout the discovery and shares enough context to make informed trade-offs.

Each participant is allocated a limited number of votes, typically between five and ten, depending on the size of the group and the volume of ideas. The exact number isn’t critical. What matters is constraint. Scarcity forces people to consider relative value rather than spreading approval evenly.

Votes can be cast physically using sticky dots or paper markers, or digitally within tools such as Miro. The mechanics are secondary. The principle is simple: democratised signal aggregation.

Where possible, I recommend anonymising the vote. Anonymous voting reduces performative alignment and minimises the influence of hierarchy. In in-person settings this can be harder to achieve, but digital canvases make it straightforward. Removing visible ownership often produces more honest prioritisation.
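If you collect votes digitally, the tally can be as simple as the sketch below. It assumes each ballot is an anonymous list of idea names (repeating a name places more than one dot on it) and enforces a per-person vote budget; the function name, the budget and the example ideas are hypothetical.

```python
from collections import Counter


def tally(ballots: list[list[str]], votes_per_person: int = 5) -> list[tuple[str, int]]:
    """Count anonymous dot votes and return ideas ranked by total dots."""
    totals: Counter = Counter()
    for ballot in ballots:
        # Scarcity is the point: reject ballots that overspend the budget
        if len(ballot) > votes_per_person:
            raise ValueError("Ballot exceeds the vote budget")
        totals.update(ballot)
    return totals.most_common()


# Hypothetical ballots from three participants, three dots each at most
ballots = [
    ["arrival check-in", "arrival check-in", "sms reminder"],
    ["sms reminder", "arrival check-in"],
    ["clearer signage"],
]
ranked = tally(ballots, votes_per_person=3)
print(ranked)
```

Because the ballots carry no names, nothing in the result reveals who backed which idea.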

Dot voting works best when:

- The group has shared exposure to the same evidence

- The problem space is well understood

- Political risk is low

- Speed is more valuable than precision

It is less effective in environments where seniority heavily influences opinion, or where the commercial stakes demand explicit effort–impact modelling.

Used appropriately, dot voting creates momentum and collective ownership. It can be a powerful way to translate creative energy into a shortlist of ideas ready for validation.

The trade-off is rigour. Unlike the effort–impact matrix, dot voting does not explicitly model cost or operational complexity. That judgement still needs to be applied before significant investment is made.

That’s it for route 2!

Closing thoughts

Regardless of the route you’ve taken, structured hypothesis formation or rapid ideation, you should now have a prioritised set of ideas ready for validation. That is the real output of discovery.

From here, ownership becomes contextual. If the opportunity sits within user-facing UI or UX, your design team will likely lead prototyping and testing. If it’s service-led, operational or communication-based, validation may sit elsewhere in the organisation. Discovery should create shared clarity across many disciplines rather than dependency on a single one.

It’s worth returning to the premise set out at the beginning of this article: opportunities for improvement rarely sit neatly inside a product backlog. They emerge across the full service ecosystem, digital and non-digital, technical and behavioural, frontstage and backstage.

A robust discovery process gives your team confidence that the ideas moving forward are driven by evidence and structured thinking.

When run consistently, this approach can become more than a one-off exercise. If the divergence phase is expansive enough and the convergence disciplined enough, discovery can evolve into a repeatable quarterly rhythm, shaping roadmap direction for a squad, a tribe, or even an entire organisation.

Discovery's goal is to reduce risk, increase clarity, and create the conditions for better bets.

If you’d like Hyperact to help you run a discovery, please contact us at hello@hyperact.co.uk to chat through the specifics of your product or service.